Text Extraction and Recognition using Matlab

₹3,000.00

Huge Price Drop : 50% Discount

Source Code + Demo Video

100 in stock

Description

ABSTRACT

This paper presents a Text Extraction and detection method, and to improve overall system performance and reliability, proposes some detection algorithms The project presents license plate recognition system using connected component analysis and template matching model for accurate identification. Automatic Text recognition is the extraction from an image. The system model uses already captured images for this recognition process… After character recognition, an identified group of characters will be compared with database number plates for authentication. The proposed model has low complexity and less time consuming in terms of number text segmentation and character recognition. This can improve the system performance and make the system more efficient by taking relevant samples. at the same time compared their advantages and disadvantages, which provide the basis for text recognition.

INTRODUCTION

At present, under the conditions of the socialist market economy, personnel, material and financial flow dramatically, therefore, the frequency of criminal, economic crime and emergent events has increased significantly in contrast with those in the planned economy period. The occurrence characteristics of these cases include that they involve a wide range and high frequency, moreover, the mobility of the criminals is high and their means become more modem Nowadays all over digitization technology is used. Text Recognition usually abbreviated to OCR, involves a computer system designed to translate images of typewritten text (usually captured by a scanner) into machine editable text or to translate pictures of characters into a standard encoding scheme representing them. OCR began as a field of research in artificial intelligence and computational vision[26]. Text Recognition used in official task in which the large data have to type like post offices, banks, colleges etc., in real life applications where we want to collect some information from text written image. People wish to scan in a document and have the text of that document available in a .txt or .docx format..

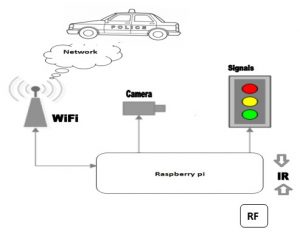

SYSTEM STRUCTURE

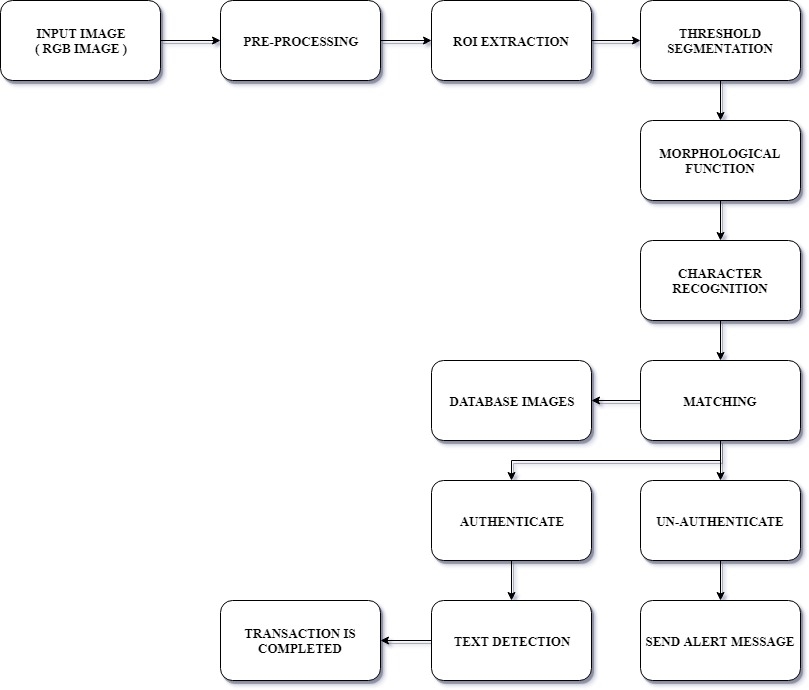

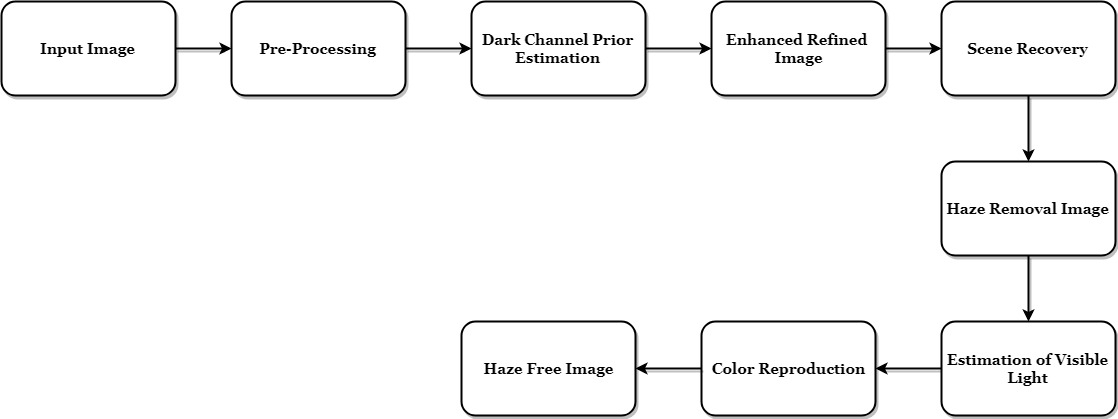

Text extraction and recognition process comprises of five steps namely text detection, text localization, text tracking, segmentation or binarization, and character recognition. Architecture of text extraction process can be visualized in Fig. 2 Text Detection: This phase takes image or video frame as input and decides it contains text or not. It also identifies the text regions in image. Text Localization: Text localization merges the text regions to formulate the text objects and define the tight bounds around the text objects. Text Tracking :This phase is applied to video data only. For the readability purpose, text embedded in the video appears in more than thirty consecutive frames. Text tracking phase exploits this temporal occurrences of the same text object in multiple consecutive frames. It can be used to rectify the results of text detection and localization stage. It is also used to speed up the text extraction process by not applying the binarization and recognition step to every detected object. Text Binarization: This step is used to segment the text object from the background in the bounded text objects. The output of text binarization is the binary image, where text pixels and background pixels appear in two different binary levels. Character Recognition: The last module of text extraction process is the character recognition. This module converts the binary text object into the ASCII text. Text detection, localization and tracking modules are closely related to each other and constitute the most challenging and difficult part of extraction process.

BLOCK DIAGRAM

PREPROCESSING

A color image is first converted to the grayscale image. The morphological operations such as the close and open operations are then performed. The size of the structure element (SE) is 4 x 4. It was determined by considering the sizes of characters and numbers in the plate. If this size is too small, the overall noise level will be larger, and if too large, the entire image will be blurred.shows an image that contains many strong edges. show the results after open and close operations, respectively. After the open operation is performed by erosion-dilation operations, black characters and numbers in the plate regions are emphasized. Moreover, through the close operation such as dilation-erosion operations, the characters and numbers in the plate regions are disappear.

ROI VERIFICATION PROCESS

The plate region verification is processed by two methods. The first method uses the aspect ratios of the possible plate regions. The second method uses the vertical edge distribution of the possible plate regions. Determination by Aspect Ratio The real size of the plate is fixed. It is always either 520mm x 110mm or 335mm x 155mm. The aspect ratios for plates are 520/110=4.73, 335/155=2.16 and the range of suitable ratio is 2 ≤ Real Aspect Ratio ≤ 5 in real image. In the case of the aspect ratio in binary ROI map, it ranges from 0.66 to 1.66, approximately 1 to 2. Then, it is available to determine whether these regions are the plate regions or not. Determination by Vertical Edge After the candidate region is determined by the aspect ratio feature, those regions are selected from the original image. The characters and numbers in the real plates usually have strong vertical edges. Therefore, the vertical edges using vertical Sobel mask are calculated. Those vertical edges are projected on the vertical plane to compute the edge distribution. The vertical edges in the plate regions are concentrated in the center of the vertical plane, while the vertical edges in other regions are existed randomly. To find the higher edge value in the center, the license plate region can be determined by sum of multiplying the normalized edge histogram value with weighting coefficient value

The proposed method in various environments.,. The accuracy of the proposed method is 90%, 40% in the complicated parking lot and cars on the road, respectively. The proposed method has the better performance than the conventional method in the situation that the edges of background are complicated shown in Fig.9. The conventional method has an error to detect the vertical edges in the background and cars as well as the edges in the plate region. The proposed method detects the exact plate region even if the edges of background are strong.

The ROI map is made by using the standard deviation of morphological open and close images, and the threshold value is calculated using the distribution of the ROI map to effectively detect the candidate region. After detecting candidate regions, those are verified using the features of the license plate. Experimental results show that the proposed method has the higher detection rate then to conventional method by 4-7% in the complex environments.

SOFTWARE REQUIREMENT

- MATLAB 7.14

CONCLUSION

In this paper an algorithm is proposed for solving the problem of offline character recognition. We had given the input in the form of images. The algorithm was trained on the training data that was initially present in the database. Preprocessing,segmentation and detect the line is done. The paper presents a brief survey of the applications in various fields along with experimentation into few selected fields. The proposed method is extremely efficient to extract all kinds of bimodal images including blur and illumination. The paper will act as a good literature survey for researchers starting to work in the field of optical character recognition

REFERENCES

[1] Ramanathan. R. et al., “A Novel Technique for English Font Recognition Using Support Vector Machines”, in Advances in Recent Technologies in Communication and Computing, Kottayam, Kerala, 2009, pp. 766-769.

[2] Line Eikvil, “Optical Character Recognition”, NorskRegnesentral, Oslo, Norway, Rep. 876, 1993.

[3] M Usman Raza, et al., “Text Extraction Using Artificial Neural Networks”, in Networked Computing and Advanced Information Management (NCM) 7th International Conference, Gyeongju, North Gyeongsang, 2011, pp. 134-137.

[4] Bertolami, Roman; Zimmermann, Matthias andBunke, Horst, ‘Rejection strategies for offline handwritten text line recognition’, ACM Portal, Vol. 27, Issue. 16, December 2006

[5] C.P. Sumathi, T. Santhanam, G.Gayathri Devi, “A Survey On Various Approaches Of text Extraction InImages”, International Journal of Computer Science &Engineering Survey (IJCSES). Vol.3, August 2012, Page no. 27-42.

[6] Datong Chen, Juergen Luettin, Kim Shearer, “A Survey of Text Detection and Recognition in Images andVideos”. Institute Dalle Molle d’Intelligence ArtificiellePerceptive Research Report, August 2000, Page no. 00-38.

[7] Xu-Cheng Yin, Xuwang Yin, Kaizhu Huang, and HongWei Hao, “Robust Text Detection in Natural Scene Images”, IEEE transaction on Pattern Analysis AndMachine Intelligence, 2013, Vol. 36, Page no. 970 – 983.

[8] K. Wang, B. Babenko, and S. Belongie, “End-to-end scene text recognition”. International conference on computer vision ICCV 2011, vol. 10, Page no.1457 – 1464.

[9] Xiaobing Wang, Yonghang Song, Yuanlin Zhang,“Natural scene text detection in multi-channel connected component segmentation”, 12th International conf. onDocument Analysis and Recognition, pp. 1375- 1379, 2013.

[10] Shehzad Muhammad Hanif, Lionel Prevost, “TextDetection and Localization in Complex Scene Images using Constrained AdaBoost Algorithm”, 10thInternational Conference on Document Analysis and Recognition, pp.1-9, 2009.

Reviews

There are no reviews yet.