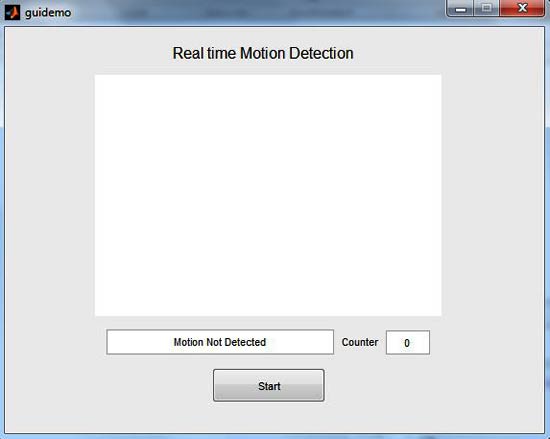

Matlab code for Real Time Motion Detection

₹3,000.00

Huge Price Drop : 50% Discount

Source Code + Demo Video

100 in stock

Description

INTRODUCTION

In video surveillance, video signals from multiple remote locations are displayed on several TV screens, which are typically placed together in a control room. In the so-called third generation surveillance systems (3GSS), all parts of the surveillance system will be digital [1], and consequently digital video will be transmitted and processed. Additionally, in 3GSS some ‘intelligence’ has to be introduced to detect relevant events in the video signals automatically. This allows the irrelevant time segments of the video sequences to be filtered out, so that only those segments that require the attention of the surveillance operator are displayed on the TV screen. Motion detection is a basic operation in the selection of significant segments of the video signals. Once motion has been detected, other features can be considered to decide whether a video signal has to be presented to the surveillance operator. If motion detection is performed after the video signals have been transmitted from the cameras to the control room, then all the bit streams must first be decompressed; this can be a very demanding operation, especially if there are many cameras in the surveillance system. For this reason, it is worth considering motion detection algorithms that operate in the compressed (transform) domain.

In this thesis we present a motion detection algorithm in the compressed domain with a low computational cost. In the following section, we assume that the video is compressed using motion JPEG (MJPEG), i.e., each frame is individually JPEG compressed.

Motion detection from a moving observer is an important technique for computer vision applications. In recent years especially, vision-based navigation for autonomous driving systems and driver support systems has received growing attention worldwide [1]-[3]. One of its most important tasks is to detect moving obstacles such as cars, bicycles, or pedestrians while the vehicle itself is travelling at high speed. Image differencing, either against a clear background or between adjacent frames, is widely used for motion detection. But when the observer is also moving, the background scene in the perspective projection image changes continuously, and it becomes more difficult to detect the truly moving objects by differencing.

To deal with this problem, many approaches have been proposed in recent years. Previous work in this area falls mainly into two categories: 1) using the difference in optical flow vectors between the background and the moving objects, and 2) calibrating the background displacement using the result of an analysis of the camera’s 3D motion. The methods developed in References [4] and [11] calculate the optical flow and estimate the reliability of the flow vectors between adjacent frames. The major flow vector, which represents the motion of the background, can be used to classify and extract the flow vectors of the truly moving objects. However, because of its high computational cost and the difficulty of determining accurate flow vectors, this approach is still impractical for real applications.

Analyzing the camera’s 3D motion and calibrating the background is the other main method for detecting moving objects. For on-board camera motion analysis, many motion detection algorithms have been proposed that depend on previous recognition results such as road lane marks and the horizon vanishing point [2][10]. These methods show good accuracy and efficiency because of their detailed analysis of road structure and measured vehicle motion. They are, however, computationally expensive and overly dependent on road features such as lane marks, and therefore give unsatisfactory results when the lane marks are covered by other vehicles or do not exist at all.

Compared with these previous works, a new method for detecting moving objects from an on-board camera is presented in this paper. To deal with the background-change problem, our method uses the results of the camera’s 3D motion analysis to calibrate the background scene. With pure point matching and the introduction of the camera’s Focus of Expansion (FOE), our method can in theory determine the camera’s rotation and translation parameters using only three pairs of matching points between adjacent frames, which makes it faster and more efficient for real-time applications. In section 2, we explain the camera’s 3D motion analysis with the introduction of the FOE. The detailed image processing methods for detecting moving objects, which include the corner extraction method and a fast matching algorithm, are then proposed in sections 3 and 4. In section 5, experimental results on a real outdoor road image sequence show the effectiveness and precision of our approach.

DEMO VIDEO

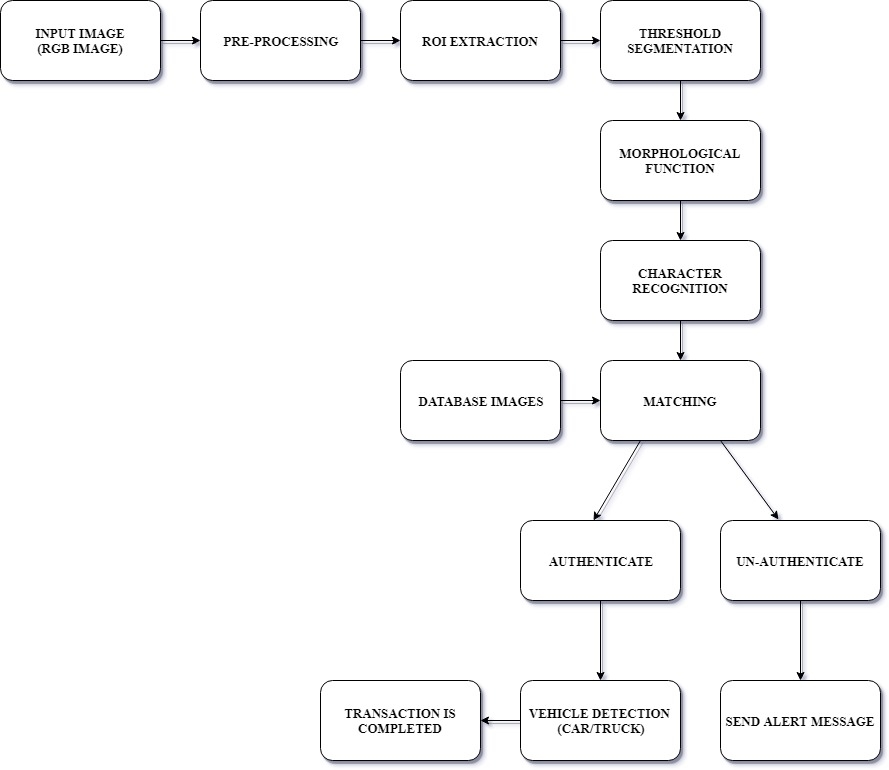

FLOW CHART

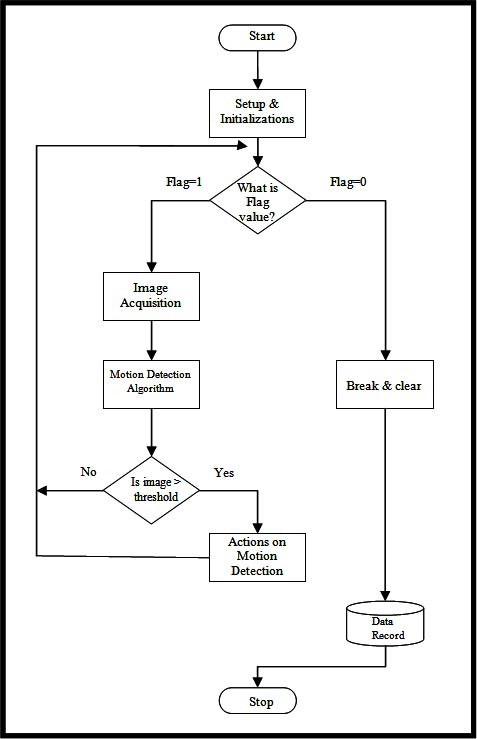

The main task of the software was to read the still images recorded from the camera and then process these images to detect motion and take the necessary actions accordingly. Figure 5.1 below shows the general flow chart of the main program.

Figure 5.1 Main Program Flow Diagram

It starts with general initialization of the software parameters and object setup. Once the program has started, the flag value that indicates whether the stop button was pressed is checked. If the stop button was not pressed, the program starts reading the images and then processes them using one of the two algorithms, as selected by the operator. If motion is detected, it starts a series of actions and then goes back to read the next images; otherwise it goes directly to read the next images. Whenever the stop button is pressed, the flag value is set to zero and the program is stopped, memory is cleared, and the necessary results are recorded. This terminates the program and returns control to the operator to collect the results.
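The control flow described above can be sketched roughly as follows. This is an illustrative Python sketch, not the product's MATLAB source: the names `run_detection_loop`, `detect`, `on_motion`, and `stop_requested` are hypothetical, and the stop button is modelled as a callable that returns `True` once a stop has been requested.

```python
from typing import Callable, Iterable, List, Sequence

# Assumption: a frame is a grayscale image stored as rows of pixel values.
Frame = Sequence[Sequence[int]]


def run_detection_loop(
    frames: Iterable[Frame],
    detect: Callable[[Frame, Frame], bool],
    on_motion: Callable[[int], None],
    stop_requested: Callable[[], bool],
) -> List[int]:
    """Read frames until the source ends or a stop is requested.

    Returns the indices of the frames in which motion was detected.
    """
    motion_log: List[int] = []      # results handed back to the operator
    source = iter(frames)
    previous = None
    index = 0
    while not stop_requested():     # check the stop flag before each read
        frame = next(source, None)  # read the next image
        if frame is None:           # no more images available
            break
        # Compare with the previous image using the selected algorithm.
        if previous is not None and detect(previous, frame):
            on_motion(index)        # take the actions for a detection
            motion_log.append(index)
        previous = frame
        index += 1
    return motion_log
```

Passing the detection algorithm in as `detect` mirrors the operator's choice between the two algorithms without fixing either one in the loop itself.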

The next sections explain each process of the flow chart in Figure 5.1 in some detail.

Motion Detection Using Sum of Absolute Difference (SAD)

This algorithm is based on image differencing techniques. It is represented mathematically by the following equation:

$$D(t) = \frac{1}{N} \sum_{p} \left| I(t_i) - I(t_j) \right|$$

where $N$ is the number of pixels in the image, used as a scaling factor,

$I(t_i)$ is the image $I$ at time $i$,

$I(t_j)$ is the image $I$ at time $j$, with the sum taken over all pixels $p$, and

$D(t)$ is the normalized sum of absolute difference for that time.

In an ideal case when there is no motion,

$I(t_i) = I(t_j)$ and $D(t) = 0$. However, noise is always present in images, and a better model of the images in the absence of motion is $I(t_i) = I(t_j) + n(p)$,

where $n(p)$ is a noise signal.
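As a short worked step, assuming $D(t)$ is the per-pixel mean of the absolute differences between the two images (with $N$ the number of pixels and the sum taken over all pixels $p$), substituting the noise model into that definition gives the value of $D(t)$ when nothing moves:

$$D(t) = \frac{1}{N} \sum_{p} \left| I(t_i) - I(t_j) \right| = \frac{1}{N} \sum_{p} \left| n(p) \right|$$

Under this assumption, $D(t)$ in a static scene equals the mean absolute noise rather than zero, so any detection threshold has to sit above that noise floor.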

The value D(t) that represents the normalized sum of absolute difference can be used as a reference to be compared with a threshold value as shown in figure 5.6 below.
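The comparison of D(t) with a threshold might look like the sketch below. It is written in Python for illustration and is not the product's MATLAB code. It assumes grayscale frames stored as nested lists of equal shape, takes D(t) to be the mean of the per-pixel absolute differences, and uses hypothetical function names. The threshold is a tuning value that the source does not specify.

```python
from typing import Sequence

# Assumption: a frame is a grayscale image stored as rows of pixel values.
Frame = Sequence[Sequence[float]]


def normalized_sad(frame_a: Frame, frame_b: Frame) -> float:
    """Return the sum of absolute pixel differences divided by the pixel count."""
    if len(frame_a) != len(frame_b):
        raise ValueError("frames must have the same number of rows")
    total = 0.0
    count = 0
    for row_a, row_b in zip(frame_a, frame_b):
        if len(row_a) != len(row_b):
            raise ValueError("frames must have the same row lengths")
        for pixel_a, pixel_b in zip(row_a, row_b):
            total += abs(pixel_a - pixel_b)
            count += 1
    if count == 0:
        raise ValueError("frames must contain at least one pixel")
    return total / count


def motion_detected(frame_a: Frame, frame_b: Frame, threshold: float) -> bool:
    """Report motion when the normalized SAD exceeds the given threshold."""
    return normalized_sad(frame_a, frame_b) > threshold
```

For example, two 2x2 frames that differ by 2 grey levels in a single pixel give a normalized SAD of 0.5. A threshold of 5.0, chosen here purely for illustration, would treat that as noise rather than motion.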
