Image Fusion Based on a Convolutional Neural Network Using OpenCV and Python


Satellite Image Analysis Using Convolutional Neural Network


ABSTRACT

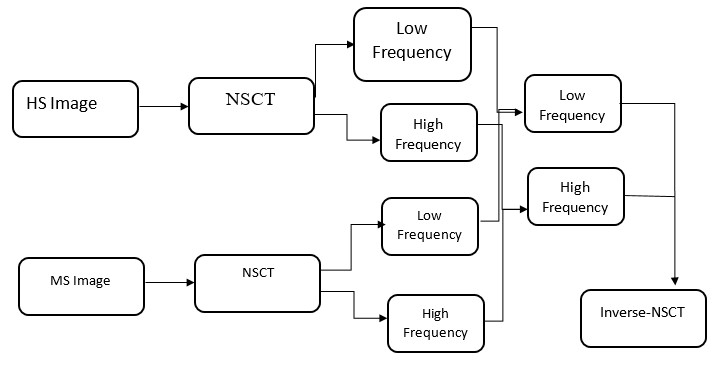

Multimodal satellite image fusion aims to minimize redundancy while retaining the complementary information in input images acquired by different imaging sensors. The goal is a single fused image that is more informative for subsequent analysis than any of the inputs. This paper presents a multimodal fusion framework in the non-subsampled contourlet transform (NSCT) domain for two distinct image types: hyperspectral and multispectral images. The main advantage of NSCT is that it provides shift invariance, improved directionality, and better preservation of phase information in the final fused image. The first stage performs fusion in the NSCT domain; the second stage enhances the contrast of salient features using a guided filter. The fused images are evaluated quantitatively with dedicated fusion metrics, and the results of the proposed approach are compared with those of other state-of-the-art fusion approaches; according to this comparison, the proposed approach yields superior fusion results.
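The abstract does not say which fusion metrics were used. As an illustration only, the sketch below assumes three metrics that are common in the image fusion literature: Shannon entropy, spatial frequency, and mutual information between each source image and the fused result. It assumes single-band images with intensities in the 0-255 range, and all function names are hypothetical.

```python
import numpy as np

def entropy(img: np.ndarray, bins: int = 256) -> float:
    """Shannon entropy in bits of the image's gray-level histogram."""
    hist, _ = np.histogram(img, bins=bins, range=(0, 256))
    p = hist / hist.sum()
    p = p[p > 0]  # empty bins contribute nothing (0 * log 0 := 0)
    return float(-(p * np.log2(p)).sum())

def spatial_frequency(img: np.ndarray) -> float:
    """sqrt(RF^2 + CF^2), each the RMS of first differences along rows/columns."""
    f = img.astype(np.float64)
    rf = np.sqrt(np.mean(np.diff(f, axis=1) ** 2))  # horizontal neighbour differences
    cf = np.sqrt(np.mean(np.diff(f, axis=0) ** 2))  # vertical neighbour differences
    return float(np.sqrt(rf ** 2 + cf ** 2))

def mutual_information(a: np.ndarray, b: np.ndarray, bins: int = 256) -> float:
    """Mutual information in bits, estimated from the joint gray-level histogram."""
    joint, _, _ = np.histogram2d(a.ravel(), b.ravel(), bins=bins,
                                 range=[[0, 256], [0, 256]])
    pab = joint / joint.sum()
    pa = pab.sum(axis=1, keepdims=True)  # marginal of a, shape (bins, 1)
    pb = pab.sum(axis=0, keepdims=True)  # marginal of b, shape (1, bins)
    nz = pab > 0
    return float((pab[nz] * np.log2(pab[nz] / (pa @ pb)[nz])).sum())

def fusion_mi(src_a: np.ndarray, src_b: np.ndarray, fused: np.ndarray) -> float:
    """Fusion MI metric: information the fused image shares with both sources."""
    return mutual_information(src_a, fused) + mutual_information(src_b, fused)
```

For all three metrics, larger values are conventionally read as more information or detail carried into the fused image.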

EXISTING SYSTEM

- Image averaging and maximum-selection methods

- Principal component analysis (PCA)

- Discrete cosine transform (DCT)
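The existing-system baselines can be sketched as simple pixel-wise or coefficient-wise rules. This is a minimal NumPy sketch, assuming two co-registered single-band images of the same shape; the function names are hypothetical. The PCA rule weights each input by the components of the principal eigenvector of their 2x2 covariance matrix, and the DCT rule (applied here to the whole image rather than the usual 8x8 blocks, a simplification) averages the DC coefficient and keeps the larger-magnitude AC coefficient.

```python
import numpy as np

def fuse_average(a, b):
    """Pixel-wise mean of the two inputs."""
    return (a.astype(np.float64) + b.astype(np.float64)) / 2.0

def fuse_maximum(a, b):
    """Pixel-wise maximum of the two inputs."""
    return np.maximum(a, b).astype(np.float64)

def fuse_pca(a, b):
    """Weighted sum with weights from the principal component of the 2x2 covariance."""
    data = np.stack([a.ravel(), b.ravel()]).astype(np.float64)  # shape (2, N)
    _, eigvecs = np.linalg.eigh(np.cov(data))  # eigenvalues in ascending order
    v = np.abs(eigvecs[:, -1])                 # principal eigenvector
    w = v / v.sum()                            # normalize weights to sum to 1
    return w[0] * a.astype(np.float64) + w[1] * b.astype(np.float64)

def dct_matrix(n):
    """Orthonormal DCT-II basis matrix C, so that C @ C.T == I."""
    k = np.arange(n)[:, None]
    i = np.arange(n)[None, :]
    c = np.sqrt(2.0 / n) * np.cos(np.pi * (2 * i + 1) * k / (2 * n))
    c[0, :] = np.sqrt(1.0 / n)
    return c

def fuse_dct(a, b):
    """Whole-image DCT fusion: max-magnitude AC coefficients, averaged DC term."""
    h, w = a.shape
    ch, cw = dct_matrix(h), dct_matrix(w)
    ya = ch @ a.astype(np.float64) @ cw.T  # forward 2-D DCT
    yb = ch @ b.astype(np.float64) @ cw.T
    y = np.where(np.abs(ya) >= np.abs(yb), ya, yb)
    y[0, 0] = 0.5 * (ya[0, 0] + yb[0, 0])
    return ch.T @ y @ cw                    # inverse 2-D DCT
```

Because the PCA weights come from the leading eigenvector, the input with more variance (typically more detail) receives the larger weight.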

PROPOSED SYSTEM

- Multimodal image fusion based on the non-subsampled contourlet transform (NSCT)

- Guided Filter

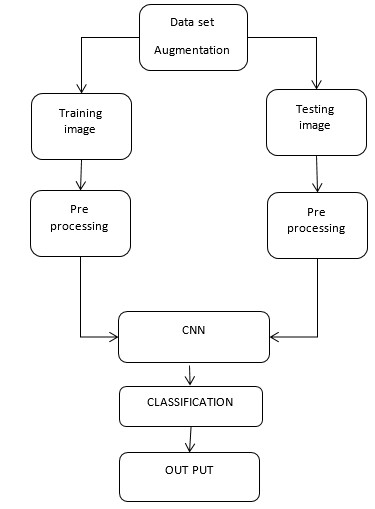

- CNN

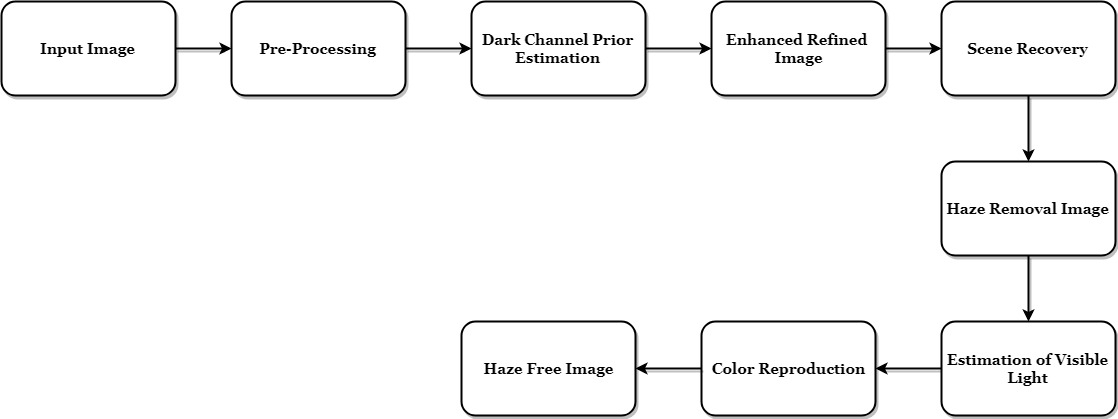

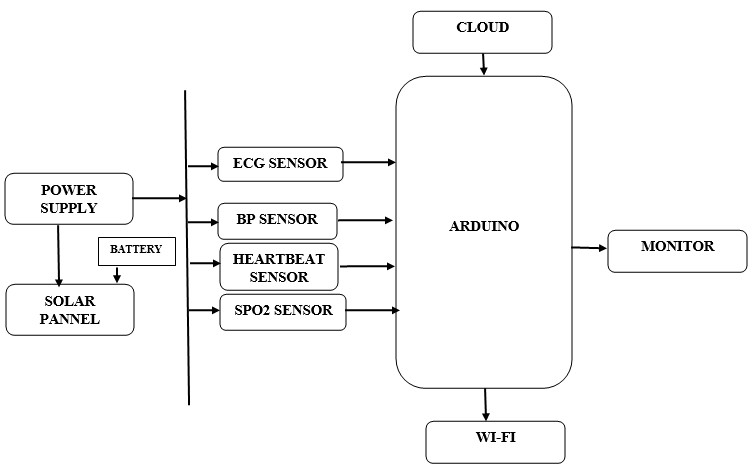
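The guided filter named in the proposed system is usually the one introduced by He et al., in which the output is a local linear transform of a guidance image. The following is a minimal NumPy sketch of that formulation, assuming single-band float images scaled to [0, 1] (the `eps` default depends on that scale). The contrast-enhancement step `enhance_details`, with its hypothetical `gain` parameter, is one common way to use the filter (boost the detail layer left after edge-preserving smoothing) and is an assumption, not necessarily the method used in this project.

```python
import numpy as np

def box_mean(img, r):
    """Mean over a (2r+1)x(2r+1) window with edge padding, via an integral image."""
    p = np.pad(img.astype(np.float64), r, mode="edge")
    s = np.cumsum(np.cumsum(p, axis=0), axis=1)
    s = np.pad(s, ((1, 0), (1, 0)))  # leading zero row/column simplifies window sums
    k = 2 * r + 1
    h, w = img.shape
    total = s[k:k + h, k:k + w] - s[0:h, k:k + w] - s[k:k + h, 0:w] + s[0:h, 0:w]
    return total / (k * k)

def guided_filter(guide, src, r=4, eps=1e-2):
    """Edge-preserving filter: output = mean(a) * guide + mean(b) per pixel."""
    I = guide.astype(np.float64)
    p = src.astype(np.float64)
    mean_I = box_mean(I, r)
    mean_p = box_mean(p, r)
    cov_Ip = box_mean(I * p, r) - mean_I * mean_p
    var_I = box_mean(I * I, r) - mean_I * mean_I
    a = cov_Ip / (var_I + eps)  # local linear coefficients
    b = mean_p - a * mean_I
    return box_mean(a, r) * I + box_mean(b, r)

def enhance_details(fused, r=4, eps=1e-2, gain=2.0):
    """Hypothetical enhancement: amplify the detail layer, then clip to [0, 1]."""
    base = guided_filter(fused, fused, r, eps)  # self-guided smoothing
    return np.clip(base + gain * (fused - base), 0.0, 1.0)
```

Larger `eps` smooths more aggressively, while edges in the guidance image (where local variance greatly exceeds `eps`) are preserved.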

BLOCK DIAGRAM

METHODOLOGIES

- Pre-processing

- NSCT-Based Multistage Decomposition

- Pixel-Level Fusion

- Guided Filter

- Performance Analysis

- CNN
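The methodology steps (pre-processing, multistage decomposition, pixel-level fusion) can be illustrated with a simplified stand-in. A full NSCT combines a non-subsampled pyramid with non-subsampled directional filter banks; the sketch below implements only an undecimated à trous (B3-spline) multiscale decomposition, which shares NSCT's shift invariance but has no directional subbands. The fusion rule (larger-magnitude detail coefficient, averaged coarse residual) is a common choice assumed here, and min-max normalization stands in for pre-processing. All names are hypothetical.

```python
import numpy as np

B3 = np.array([1, 4, 6, 4, 1], dtype=np.float64) / 16.0  # B3-spline smoothing kernel

def normalize(img):
    """Assumed pre-processing: min-max scaling to [0, 1]."""
    f = img.astype(np.float64)
    lo, hi = f.min(), f.max()
    return (f - lo) / (hi - lo) if hi > lo else np.zeros_like(f)

def _smooth(img, level):
    """Separable B3 smoothing with holes: kernel taps spaced 2**level apart."""
    step = 2 ** level
    pad = 2 * step
    out = img
    for axis in (0, 1):
        widths = [(pad, pad) if ax == axis else (0, 0) for ax in range(2)]
        padded = np.pad(out, widths, mode="edge")
        acc = np.zeros_like(out)
        n = out.shape[axis]
        for t, w in enumerate(B3):
            sl = [slice(None), slice(None)]
            sl[axis] = slice(t * step, t * step + n)  # shifted, undecimated view
            acc += w * padded[tuple(sl)]
        out = acc
    return out

def atrous_decompose(img, levels=3):
    """Shift-invariant decomposition: img == sum(details) + residual exactly."""
    current = img.astype(np.float64)
    details = []
    for j in range(levels):
        smooth = _smooth(current, j)
        details.append(current - smooth)  # detail plane at scale j
        current = smooth
    return details, current

def fuse_multiscale(a, b, levels=3):
    """Pixel-level fusion in the multiscale domain, then reconstruction."""
    da, ra = atrous_decompose(a, levels)
    db, rb = atrous_decompose(b, levels)
    # Detail planes: keep the coefficient with the larger magnitude.
    fused = [np.where(np.abs(x) >= np.abs(y), x, y) for x, y in zip(da, db)]
    # Coarse residual: average the two approximations.
    return sum(fused) + 0.5 * (ra + rb)
```

The inputs are assumed to be co-registered and resampled to the same grid beforehand; each hyperspectral/multispectral band pair would be normalized and passed through `fuse_multiscale` separately.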

APPLICATIONS

- Satellite Application

SOFTWARE

- MATLAB

