AI -Snake game design using Machine Learning

₹5,000.00 Exc Tax

AI -Snake game design using Machine Learning

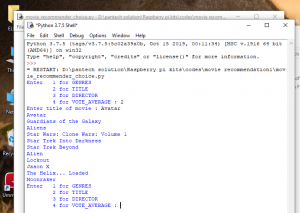

Platform : Python

Delivery Duration : 3-4 Working days

100 in stock

Description

ABSTRACT

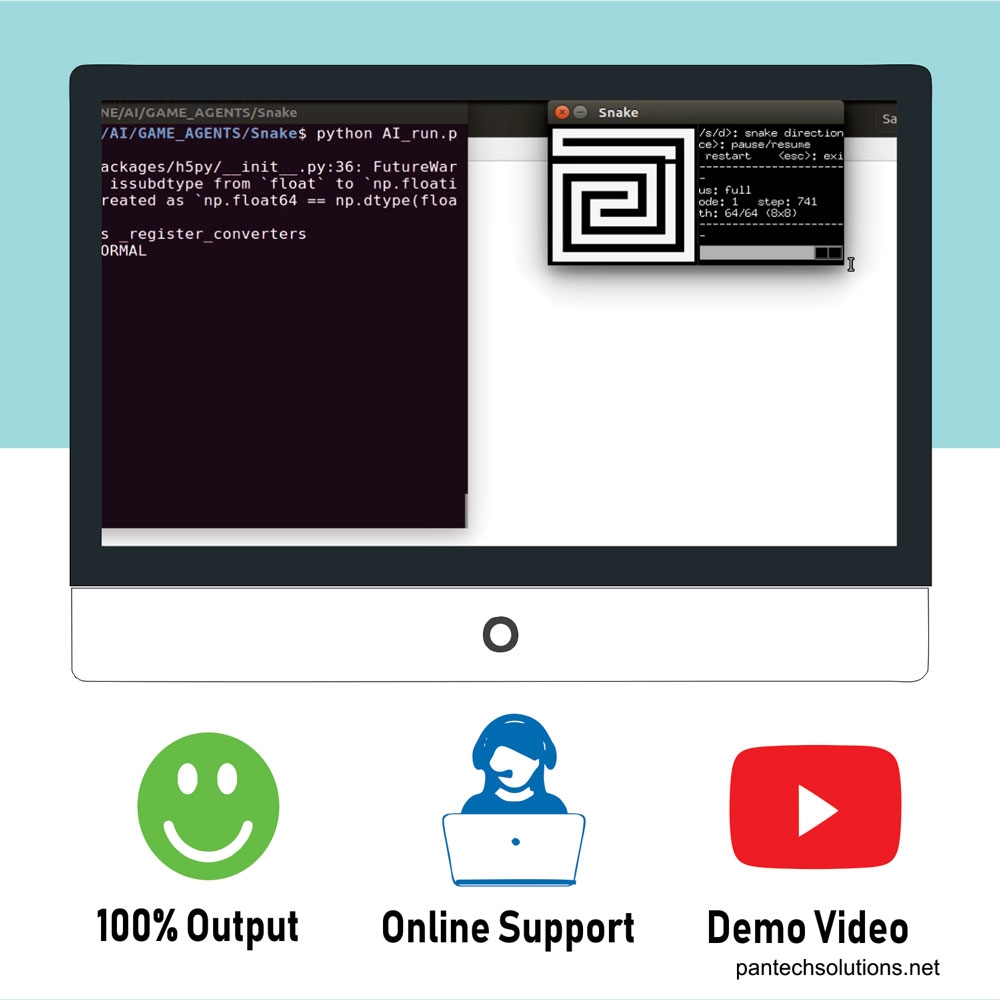

Methods in the domain of artificial intelligence (AI) have been applied to develop agents capable of playing a variety of games. Snake game is a computer game, whose goal is to control a snake to move and collect foods in the map. The single-player variant of Snake is a well-known and popular video game that requires a player to navigate a line-based representation of a snake through a two-dimensional playing area, while avoiding collisions with the walls of the playing area and the body of the snake itself. A score and the snake length are increased whenever the snake is moved through items representing food. The game thus becomes more challenging as the score increases. The application of AI techniques to playing the game of Snake has not been very well explored. In this project we work on techniques which are empirically compared against three Snake playing agents in terms of several performance measures(GREEDY, Hamilton, and DQN).

DEMO VIDEO

INTRODUCTION

Snake game is a ideal computer game, in which we control a snake to move around and collect food in a map. In this paper a controller is developed using artificial intelligence (AI) by using movement rating functions and evolutionary algorithms (EA). Before elaborating how to design the controller, the snake game which is implemented and the objective defined will be explained initially.

In the game , the snake is allowed to pass through all the area around a 2-Dimensional playing field (i.e.)game map which is surrounded by walls. At each distinct interval (a time step), the snake should move forward, turn left, turn right, as the snake requires and the snake cannot stop moving. The game will be generated randomly and a piece of food will be placed anywhere in the map , whenever there is no food on the map. When the snake moves towards the food and if the food is eaten then the length of the snake will be increased by one. The goal of the game is to eat as many food without getting collide to the wall or by itself. The objective of the game is to maximize the score. score. The above-mentioned simple strategy may keep the snake alive, but without moving toward the apples efficiently it cannot get a high score. Thus, it is needed to be designed with more intelligent controller, which is the topic of this paper.

LITERATURE SURVEY

EAs have shown many successful applications to design AI or generate contents for computer games. A useful paradigm is to use EAs to optimize the parameters of the controller/game. Gallager and Ryan proposed a rule-based controller for playing the Ms. Pacman game. It is a game about moving a character (Ms. Pacman) to collect pills and to escape from ghosts in a maze. The controller first determines its state, explore or retreat, based on the distance between Pacman and the ghost. Then, it determines the new direction of movement based on the type of its location (e.g. corridor or intersection) probabilistically. The distance threshold and the probabilities form a set of 85 parameters of the controller. An evolution strategy (ES) was applied to optimize values of these parameters.

Cole et al. designed an AI controller for Counter Strike, a popular first-person shooting (FPS) game. In this game, human and computer players equip themselves with different types of weapons (e.g. AK-47 or sniper rifle) to complete specified missions (e.g. defuse bombs). Behaviors of controllers depend on the preference of weapons and the aggressivity (how aggressive the player is). Values of these parameters were optimized by a genetic algorithm (GA). Their experiments and results showed that the GA-assisted players perform similarly to human-tuned players.

Bohm et al solved the tetris game by EA-based optimization and rating functions. Tetris is a block falling game, in which players need to put a series of blocks of different shapes properly to get high scores. Böhm et al. evaluated different positions of placing blocks by many criteria such as pile height and removed lines. These criteria were aggregated, and the best position was determined by the aggregated value. The EA was responsible for optimizing the weight values. The framework of our controller is similar to theirs.

PROPOSED SYSTEM

In this game settings , the game map will be 8 units high and 8 units wide , which consist of 64 available spaces. The snake at first will begin at the top left corner , facing towards right , with an initial length of 4 units. Therefore the snake can eat not more than 60 pieces of food before filling up the entire map.

A) Path Solver

Some methods are provided to find the shortest path and the longest path from the snake’s head to other points on the map.

Shortest path

The breadth first search is used by the path solver to find the shortest path along the map.

Longest path

The longest path in the playing field (i.e., a cyclic, undirected and unweighted graph) is NP-hard. Path Solver uses a heuristic algorithm to find suboptimal solutions.

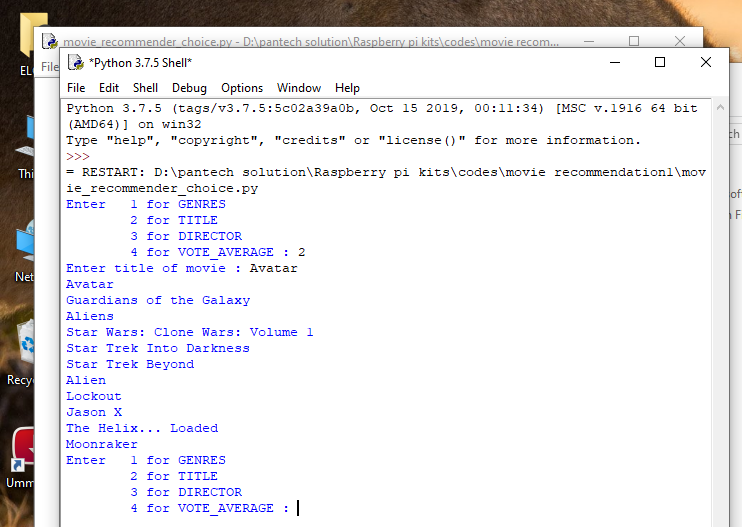

B) Greedy solver

The greedy solver will make the snake to eat the food along the shortest path , if it thinks that particular path will be safe for the snake to eat . otherwise it makes the snake to move around until it finds a safe path . since it need a paths searching , it depends on the path solver.

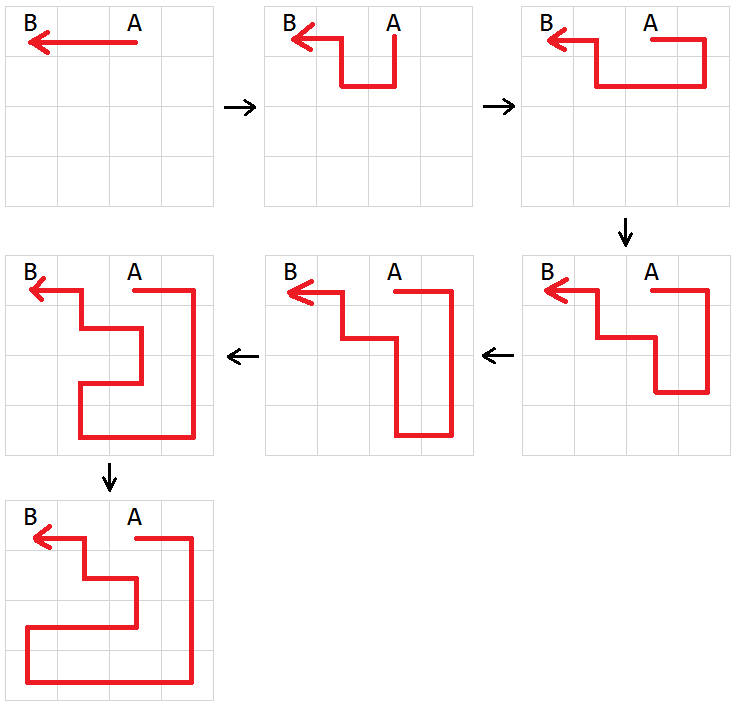

C) Hamilton solver

Initially the Hamilton Solver builds a Hamiltonian cycle on the playing field and then directs the snake to eat food along the path. To reduce the average steps the snake takes to success, it enables the snake to take shortcuts anywhere along the map if possible. This Hamiltonian solver also depends on the path solver.

D) DQN Solver

DQN Solver uses a deep reinforcement learning algorithm named Deep Q-Network[2] (DQN) to solve the problem

SOFTWARE REQUIREMENTS

- Python

- pytest

- numpy

- matplotlib

- tensorflow

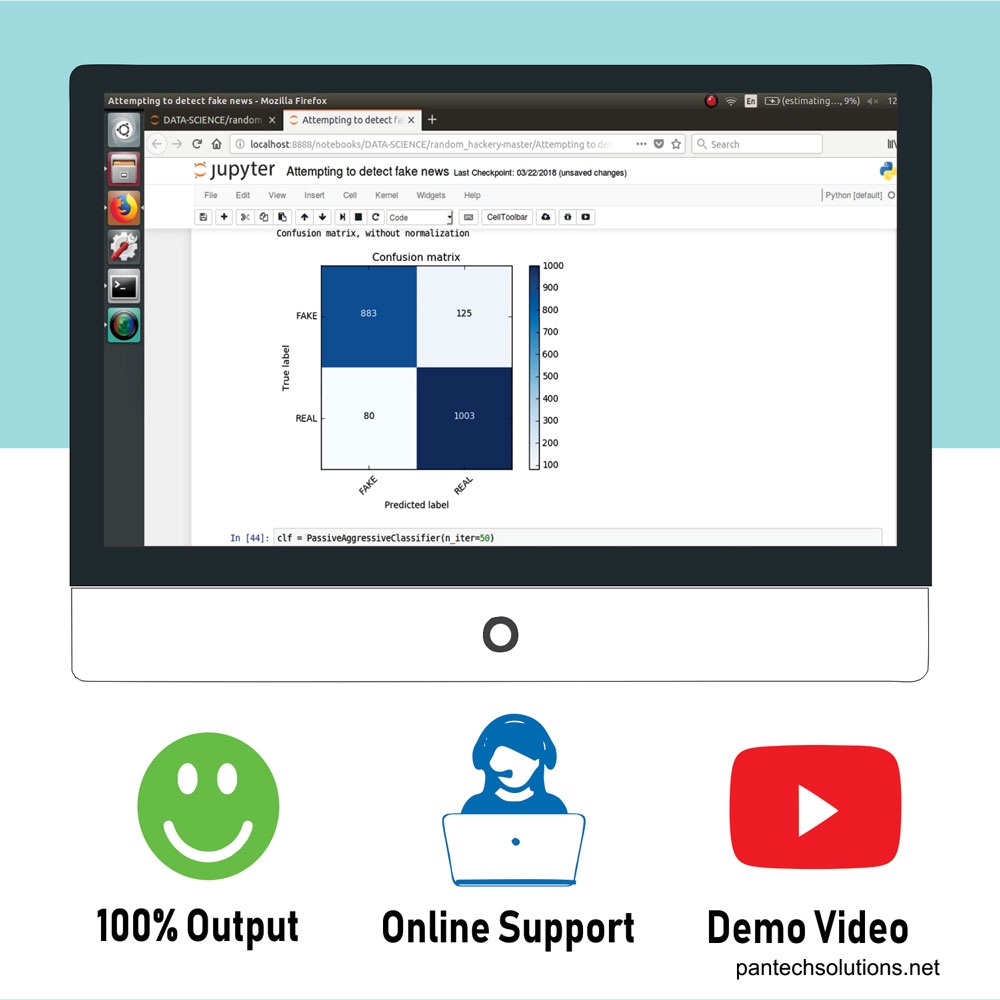

RESULTS

Greedy Solver – Results

It directs the snake to eat the food along the shortest path if it thinks the snake will be safe. Otherwise , the snake will move around all area around the playing field until it finds a safe path.

Hamiltonian cycle – Results

The Hamiltonian cycle works only when the initial positions of the snake’s head and bodies are similar to the situation when the point 0, 1 and 2 ( considering the initial head of the snake and bodies are 2,1,0 respectively). Then make the point 1 to be unreachable and generate the longest path from 2 to point 0. Finally, we join the starting point 2 and the ending point 0, which forms a Hamiltonian cycle.

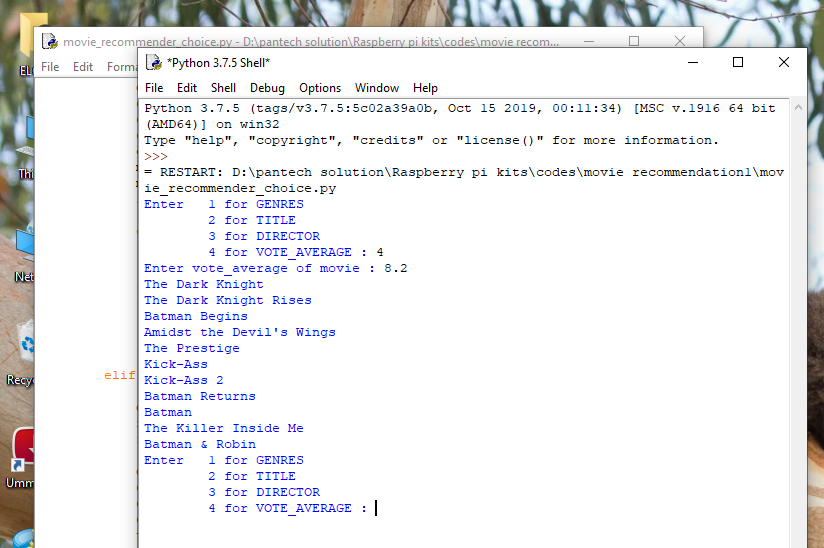

DQN-Result

The input for the network is the state of the agent while the output is the expected reward of each action the agent can take. The epsilon greedy gives the agent the chance to exploit its environment.

REFERENCES

1) Tapsell, J., Nokia 6110 Part 3 – Algorithms. (2015).

2) Mnih, V., Kavukcuoglu, K., Silver, D., Rusu, A. A., Veness, J., Bellemare, M. G., et al. (2015). Human-level control through deep reinforcement learning. Nature, 518(7540), 529-533. [Link]

3) van Hasselt, H., Guez, A., Silver, D. (2016). Deep reinforcement learning with double q-learning. Proceedings of the Thirtieth AAAI Conference on Artificial Intelligence, 2094-2100.

Reviews

There are no reviews yet.