Eyeball movement based cursor control using Raspberry Pi


In this project you will learn to use computer vision to detect the eye and track eyeball movement for cursor control.

Description

ABSTRACT

People need artificial means of interaction, such as a virtual keyboard, for different reasons. Many people depend on such artificial means because of an illness. This technology lets them control everything on the computer through eyeball movement for cursor control. A camera captures images of the eye. The system first detects the center position of the pupil. Different variations in pupil position are then mapped to different commands for the virtual keyboard. The signals pass through the motor driver to interface with the virtual keyboard itself. The motor driver controls both speed and direction to enable the virtual keyboard to move forward, left, right, and stop.

INTRODUCTION

At present, paralyzed people need assistance to do any work, and someone must stay with them to take care of them. With an eyeball tracking mechanism, a centroid can be fixed on the eye, and the paralyzed person's eye is tracked based on that centroid. Eyeball tracking has many applications, such as home automation using a Python GUI, robotic control, and a virtual keyboard.

EXISTING SYSTEM

A MATLAB-based cursor control system already exists. An eye-movement-controlled wheelchair using Raspberry Pi is another existing system, which controls the wheelchair by monitoring eye movement. The present project redesigns cursor control using computer vision with Python programming.

PROPOSED SYSTEM

In our proposed system, the computer's cursor movement is controlled by eye movement using OpenCV. The system comprises a Raspberry Pi, the heart of the system, which is interfaced with a USB webcam and a monitoring unit. The camera captures the eyeball movement, which is processed in OpenCV. To operate the mouse pointer, the PyAutoGUI library can also be used for cursor movement.
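As a rough illustration of the PyAutoGUI step, the sketch below converts a pupil offset (in camera pixels) into a relative cursor move. The function names, the `gain` factor, and the `dead_zone` value are assumptions made for this example and are not taken from the project code. `pyautogui.moveRel` is a real PyAutoGUI call and is imported lazily so that the mapping logic can be tried without a display.

```python
def cursor_step(offset, gain=4.0, dead_zone=3):
    """Convert a pupil offset (dx, dy) in camera pixels to a cursor step.

    gain and dead_zone are illustrative values, not tuned for any camera.
    Offsets inside the dead zone are ignored so small jitter does not
    move the cursor.
    """
    dx, dy = offset
    step_x = 0 if abs(dx) <= dead_zone else int(round(dx * gain))
    step_y = 0 if abs(dy) <= dead_zone else int(round(dy * gain))
    return step_x, step_y


def move_cursor(step):
    """Move the mouse pointer by step = (x, y) pixels relative to its position."""
    # Imported here so cursor_step can be used on a machine without a display.
    import pyautogui

    if step != (0, 0):
        pyautogui.moveRel(step[0], step[1], duration=0.05)


# Example: the pupil moved 10 px right and 2 px up from the reference.
print(cursor_step((10, -2)))
```

Because a webcam facing the user sees a mirrored image, the sign of the horizontal offset may need to be flipped in practice.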

DEMO VIDEO

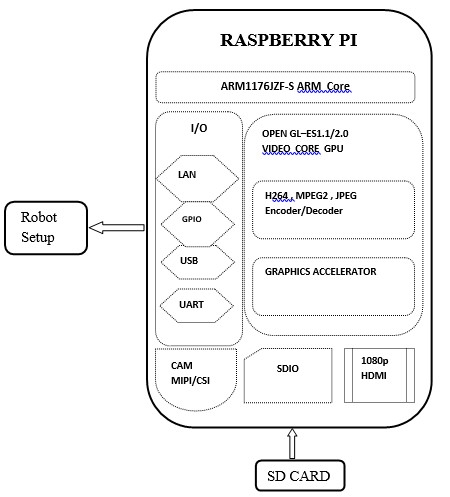

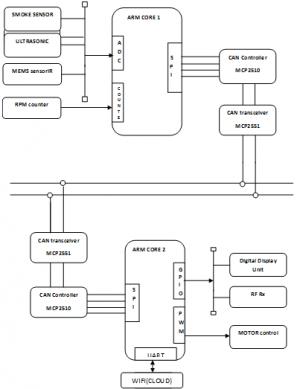

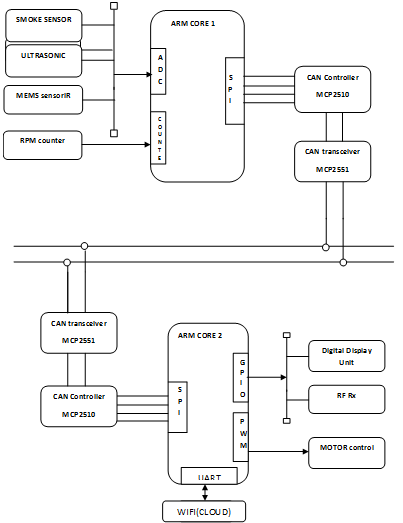

BLOCK DIAGRAM

BLOCK DIAGRAM DESCRIPTION

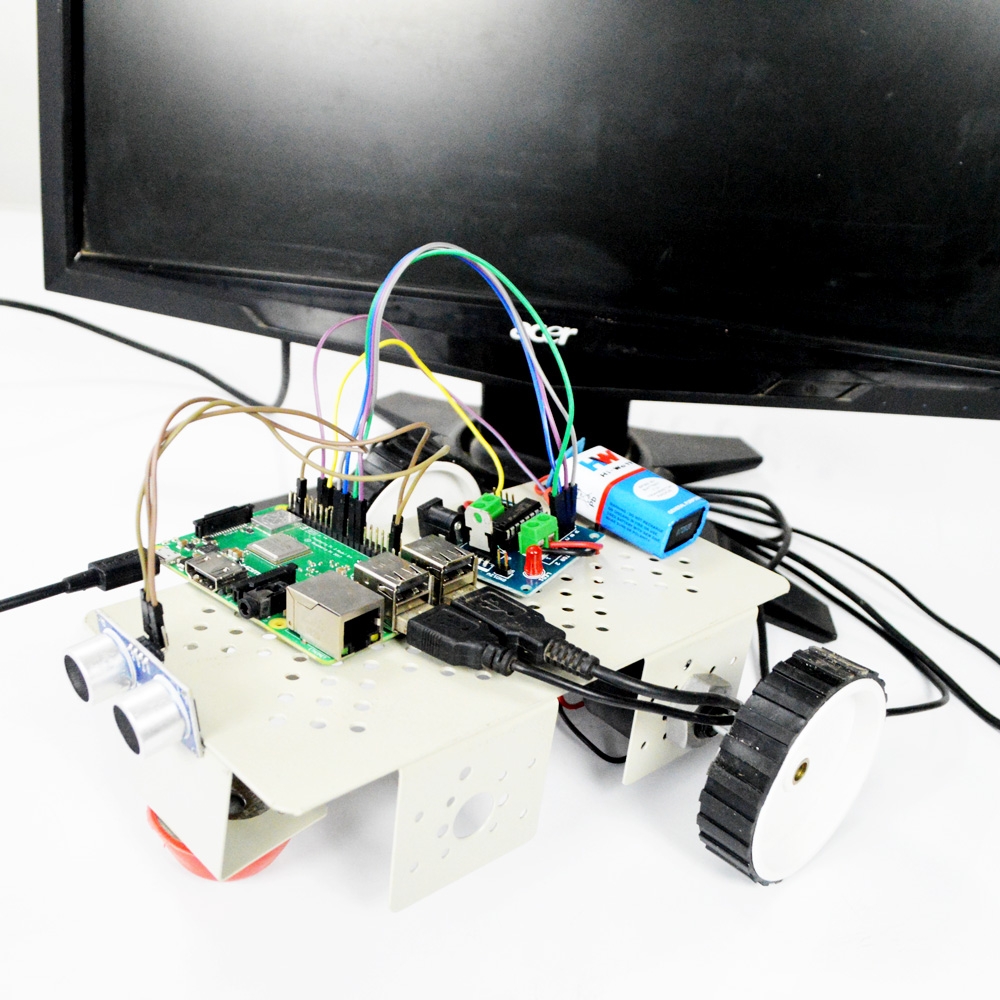

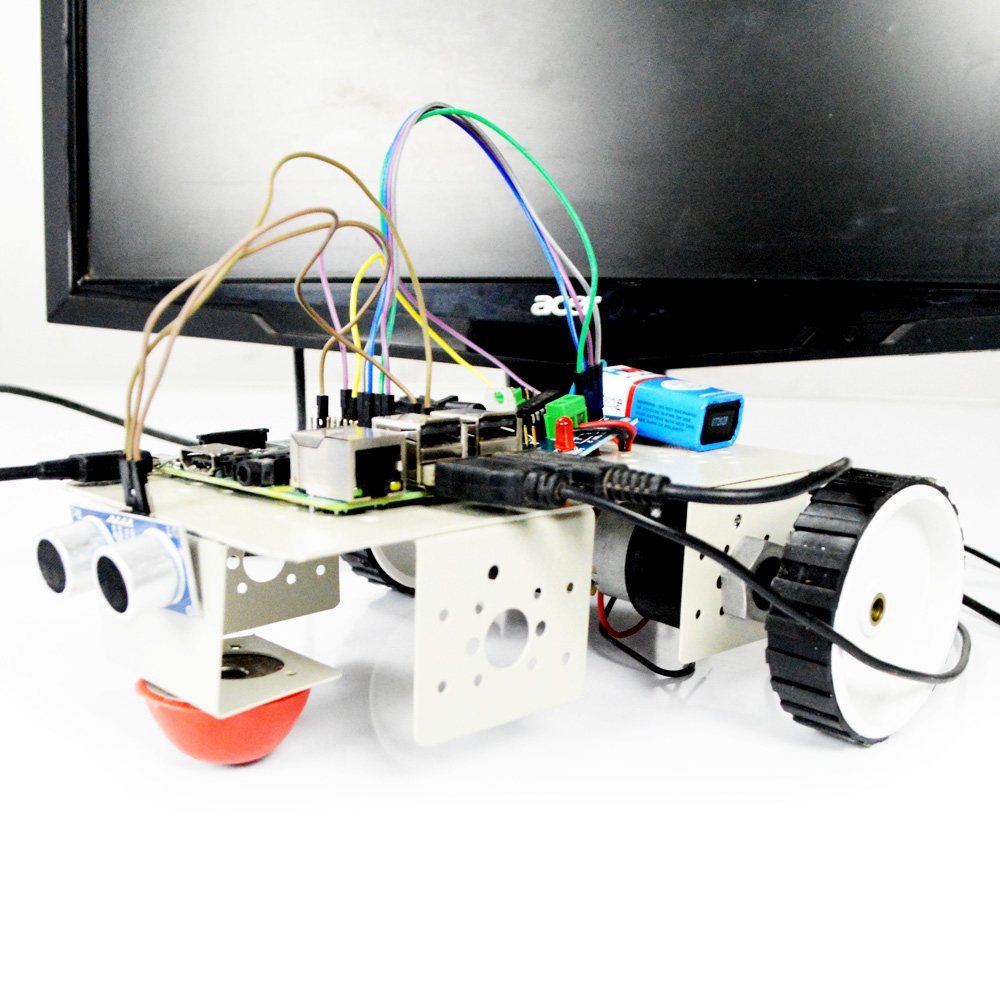

In this block diagram, the whole system is controlled by the ARM11 processor on the Raspberry Pi board. The board is connected to a monitor, a camera, and an SD card. These components are connected through USB adaptors. The Raspberry Pi is the key element of the processing module: it continuously monitors eye movement through the USB camera interface. The camera captures images of the eye; USB cameras are well suited to many imaging applications. The Raspberry Pi uses an SD card, on which Raspbian OS and OpenCV are installed. First, the USB camera captures an image. The OpenCV code then focuses on the eye in the image and detects the center position of the pupil. This center position is taken as a reference, and subsequent deviations in the X and Y coordinates are each assigned to a particular command.
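The reference-and-deviation idea described above can be sketched in plain Python. This is a minimal illustration under stated assumptions, not the project's implementation: the pupil is approximated as the centroid of all pixels darker than a fixed `threshold`, and the command names, `threshold`, and `dead_zone` are hypothetical. On real frames, OpenCV's `cv2.threshold` and `cv2.moments` could compute the same centroid more efficiently.

```python
def pupil_center(gray, threshold=50):
    """Return the centroid (x, y) of pixels darker than threshold, or None.

    gray is a list of rows of 0-255 intensities. The threshold is an
    assumed value; it depends on the camera and the lighting.
    """
    count = sum_x = sum_y = 0
    for y, row in enumerate(gray):
        for x, value in enumerate(row):
            if value < threshold:
                count += 1
                sum_x += x
                sum_y += y
    if count == 0:
        return None
    return sum_x / count, sum_y / count


def command_for(center, reference, dead_zone=1.0):
    """Map the deviation of center from reference to a command name."""
    dx = center[0] - reference[0]
    dy = center[1] - reference[1]
    if abs(dx) <= dead_zone and abs(dy) <= dead_zone:
        return "CENTER"
    if abs(dx) >= abs(dy):
        return "RIGHT" if dx > 0 else "LEFT"
    # Image rows grow downward, so a positive dy means the pupil moved down.
    return "DOWN" if dy > 0 else "UP"


# A tiny synthetic "eye": bright background with a two-pixel dark pupil.
frame = [[200] * 7 for _ in range(5)]
frame[2][3] = frame[2][4] = 10
reference = pupil_center(frame)

# The pupil shifts to the left edge of the frame.
moved = [[200] * 7 for _ in range(5)]
moved[2][0] = moved[2][1] = 10
print(command_for(pupil_center(moved), reference))
```

The dead zone keeps small deviations around the reference from triggering a command.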

PROJECT DESCRIPTION

This system allows you to control your mouse cursor with your eyeball movement. Cursor movement is the initial stage; later, the system can be extended to control appliances using eyeball movement. The camera should be fixed in place under good lighting to increase the accuracy of eyeball movement detection. Processing follows a fixed sequence: the system first detects the eyes, then detects the motion of the eyeball by placing a center point on it.
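The "detect the eyes first" stage might look like the sketch below. It assumes OpenCV's bundled Haar cascade `haarcascade_eye.xml` and the `cv2.data.haarcascades` path, which is available in the pip `opencv-python` package but may be missing from other builds. The source does not say which detector the project uses, so this is one possible choice, and the helper names are hypothetical.

```python
def largest_box(boxes):
    """Return the (x, y, w, h) box with the largest area, or None if empty."""
    boxes = [tuple(int(v) for v in b) for b in boxes]
    if not boxes:
        return None
    return max(boxes, key=lambda b: b[2] * b[3])


def crop_eye(frame_bgr, cascade):
    """Return the grayscale eye region of a BGR frame, or None if no eye is found."""
    import cv2

    gray = cv2.cvtColor(frame_bgr, cv2.COLOR_BGR2GRAY)
    eyes = cascade.detectMultiScale(gray, scaleFactor=1.1, minNeighbors=5)
    box = largest_box(eyes)
    if box is None:
        return None
    x, y, w, h = box
    return gray[y:y + h, x:x + w]


def main():
    """Show the detected eye region from the first USB camera; press q to quit."""
    import cv2

    # Assumed cascade location; adjust the path if cv2.data is unavailable.
    cascade = cv2.CascadeClassifier(cv2.data.haarcascades + "haarcascade_eye.xml")
    camera = cv2.VideoCapture(0)
    try:
        while True:
            ok, frame = camera.read()
            if not ok:
                break
            eye = crop_eye(frame, cascade)
            if eye is not None:
                cv2.imshow("eye", eye)
            if cv2.waitKey(1) & 0xFF == ord("q"):
                break
    finally:
        camera.release()
        cv2.destroyAllWindows()
```

Calling `main()` on a Raspberry Pi with a USB camera attached would start the preview loop. The cropped region is what a pupil-centroid step would then process.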

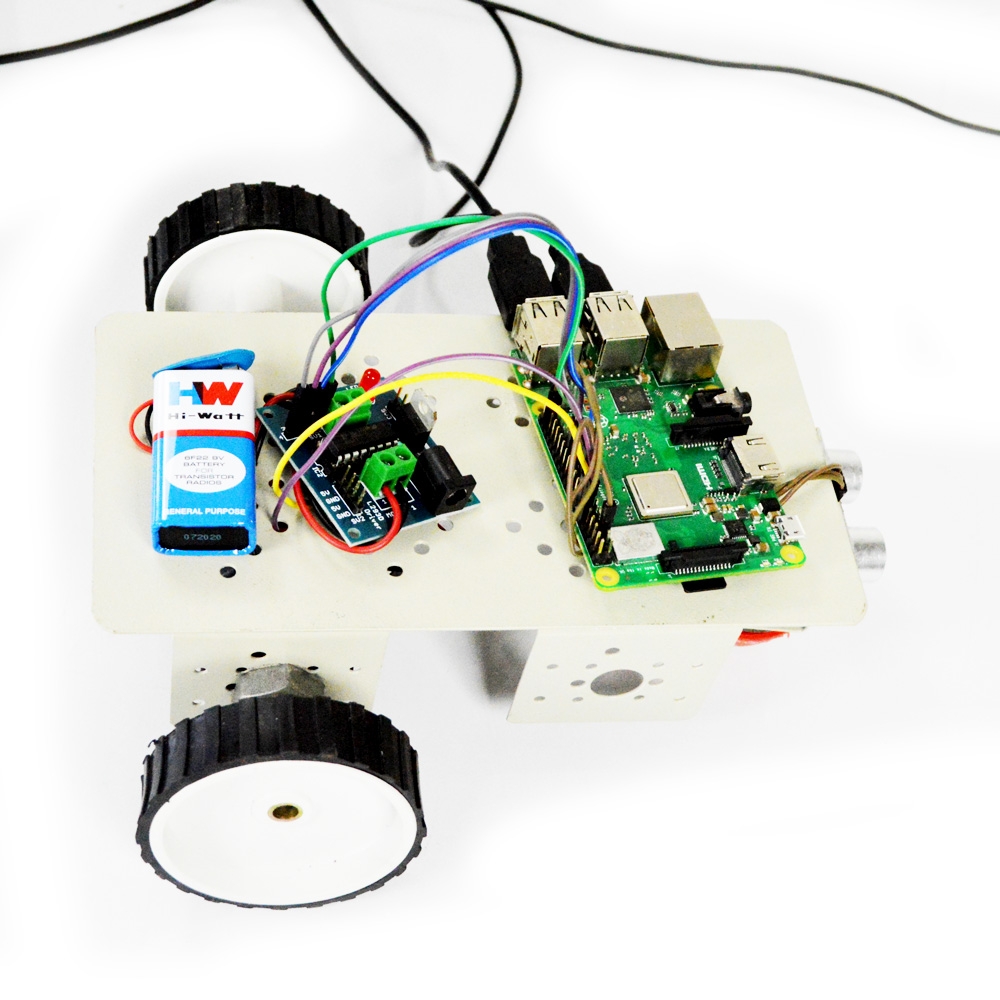

HARDWARE REQUIRED

- Raspberry Pi

- USB Camera

- Monitor

- USB adaptors

- SD card

SOFTWARE REQUIRED

- Raspbian Jessie OS

- OpenCV

- Python Language

APPLICATIONS

- BIO-GADGETS applications

ADVANTAGES

- High accuracy

- Physically handicapped people can operate computers

CONCLUSION

In this research, the experimental results provide objective eye-tracking evidence that confirms the hypotheses based on the findings of existing research: most students recognize beacons and pay more attention to these areas when debugging. Only statistically significant results are reported in the conclusions, which supports their validity. Previous research has revealed a relationship between working memory capacity and cognitive activities related to debugging, with regard to mental arithmetic, short-term memory, logical thinking, and problem-solving. Thus, eyeball movement tracking is applied to physically challenged people to obtain various results.


