Classification of Skin Lesions Using OpenCV

₹5,000.00 Exc Tax

Classification of Malignant Melanoma and Benign Skin Lesions Using a Back-Propagation Neural Network and the ABCD Rule

Platform: Python

Delivery Duration: 3-4 working days


Description

ABSTRACT

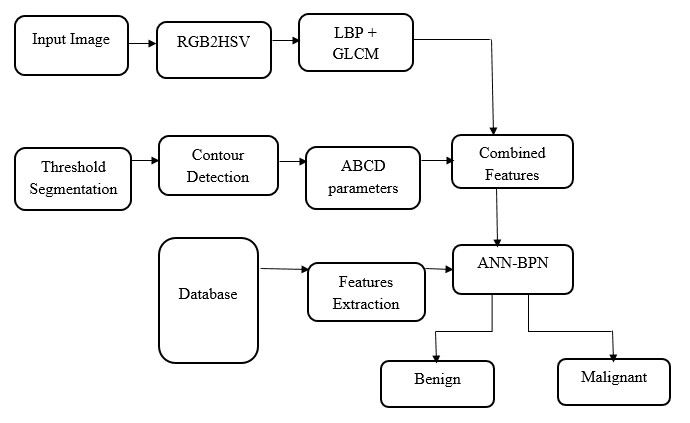

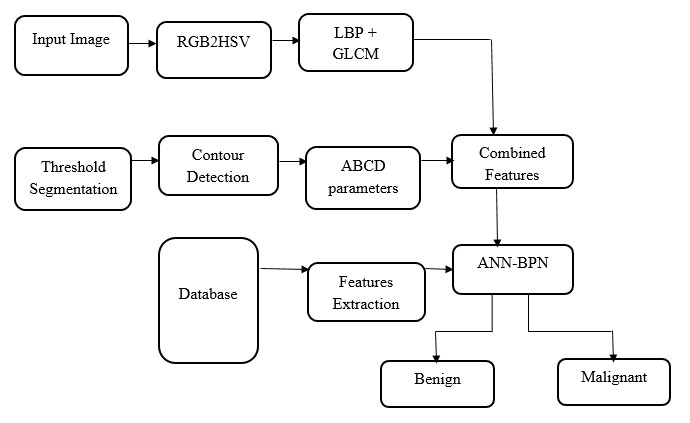

Cancer is one of the most dangerous human diseases and is mainly caused by genetic instability arising from multiple molecular alterations. Among the many forms of human cancer, skin cancer is the most common. To detect skin cancer at an early stage, lesion images are analyzed with techniques such as segmentation and feature extraction. Here, we focus on the detection of malignant melanoma, which arises from the melanin-producing cells (melanocytes) of the skin. We use the ABCD rule of dermoscopy for malignant melanoma detection. The system characterizes a skin lesion in several steps: image acquisition, pre-processing, segmentation, feature extraction and selection, and classification. Feature extraction uses digital image processing to measure asymmetry, border irregularity, color, and diameter, and Local Binary Patterns (LBP) are used to extract texture features. Finally, a back-propagation neural network classifies the lesion as benign or malignant.
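The asymmetry, border, color, and diameter measurements listed above can be sketched in NumPy from a binary lesion mask, such as the output of the segmentation step. This is a minimal sketch under stated assumptions, not the project's own code: asymmetry is taken as the non-overlapping area between the lesion and its mirror image about the bounding-box centre lines (a simplification; aligning to the principal axes is more common), border irregularity is the compactness index P^2 / (4πA) with the perimeter approximated by counting boundary pixels, color is the per-channel standard deviation inside the lesion, and diameter is the equivalent diameter in pixels. The function name `abcd_features` is hypothetical.

```python
import numpy as np

def abcd_features(mask, image=None):
    """Simple A, B, C, D descriptors for a binary lesion mask (True = lesion).

    Hypothetical helper: a sketch, not the project's implementation.
    """
    mask = np.asarray(mask, dtype=bool)
    rows, cols = np.nonzero(mask)
    if rows.size == 0:
        raise ValueError("mask contains no lesion pixels")
    # Crop to the bounding box so that the flips mirror about its centre lines.
    crop = mask[rows.min():rows.max() + 1, cols.min():cols.max() + 1]
    area = crop.sum()

    # A: non-overlapping area between the lesion and its mirror image,
    # relative to the lesion area, averaged over both axes (0 = symmetric).
    a_vert = np.logical_xor(crop, np.fliplr(crop)).sum() / area
    a_horz = np.logical_xor(crop, np.flipud(crop)).sum() / area
    asymmetry = (a_vert + a_horz) / 2

    # B: compactness index P^2 / (4*pi*A); lowest for round lesions,
    # larger for ragged borders. P = lesion pixels with a 4-neighbour outside.
    padded = np.pad(crop, 1)
    interior = (crop & padded[:-2, 1:-1] & padded[2:, 1:-1]
                & padded[1:-1, :-2] & padded[1:-1, 2:])
    perimeter = (crop & ~interior).sum()
    compactness = perimeter ** 2 / (4 * np.pi * area)

    # C: colour variegation as the standard deviation of each channel inside the lesion.
    color_std = None
    if image is not None:
        color_std = np.asarray(image, dtype=float)[mask].std(axis=0)

    # D: diameter (in pixels) of a disc with the same area as the lesion.
    diameter = np.sqrt(4 * area / np.pi)
    return {"asymmetry": float(asymmetry), "compactness": float(compactness),
            "color_std": color_std, "diameter": float(diameter)}
```

The diameter is in pixels; comparing it with a clinical threshold in millimetres would need the camera's pixel spacing, which this sketch does not assume.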


EXISTING METHOD

- Principal Component Analysis (PCA)

- Local binary pattern (LBP) and shape features

- KNN and FNN classifiers

K-MEANS SEGMENTATION

K-means is one of the simplest unsupervised learning algorithms for the well-known clustering problem. It classifies a given data set into a number of clusters (say k) fixed a priori. The main idea is to define k centroids, one for each cluster. These centroids should be placed carefully, because different initial locations lead to different results; the better choice is to place them as far away from each other as possible. The next step is to take each point in the data set and associate it with the nearest centroid. When no point is pending, the first step is complete and an initial grouping is done. At this point, k new centroids are recalculated as the barycenters of the clusters from the previous step. With these k new centroids, each data point is again assigned to its nearest new centroid. This creates a loop in which the k centroids change their location step by step until no more changes occur, that is, until the centroids stop moving. The algorithm aims at minimizing an objective function, in this case the squared-error function

$$J = \sum_{j=1}^{k} \sum_{x_i \in C_j} \lVert x_i - \mu_j \rVert^2,$$

where $\mu_j$ is the centroid of cluster $C_j$.
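The loop described above (place centroids far apart, assign each point to its nearest centroid, move each centroid to the barycenter of its cluster, repeat until nothing moves) can be sketched in NumPy as follows. This is an illustrative sketch, not the project's code; OpenCV also ships its own `cv2.kmeans`. The helper `segment_lesion` is hypothetical and assumes the lesion is the darkest cluster of grey levels, which will not hold for every image.

```python
import numpy as np

def kmeans(data, k, n_iter=100, seed=0):
    """Lloyd's k-means on an (N, D) array; returns (labels, centroids)."""
    data = np.asarray(data, dtype=float)
    rng = np.random.default_rng(seed)
    # Farthest-point initialisation: start from a random sample, then keep
    # adding the sample farthest from every centroid chosen so far.
    centroids = [data[rng.integers(len(data))]]
    for _ in range(1, k):
        dist = np.min([np.linalg.norm(data - c, axis=1) for c in centroids], axis=0)
        centroids.append(data[dist.argmax()])
    centroids = np.array(centroids)
    for _ in range(n_iter):
        # Assignment step: each sample joins its nearest centroid.
        dists = np.linalg.norm(data[:, None, :] - centroids[None, :, :], axis=2)
        labels = dists.argmin(axis=1)
        # Update step: move each centroid to the barycenter of its cluster
        # (an empty cluster keeps its old centroid).
        new = np.array([data[labels == j].mean(axis=0) if np.any(labels == j)
                        else centroids[j] for j in range(k)])
        if np.allclose(new, centroids):
            break  # centroids no longer move
        centroids = new
    return labels, centroids

def segment_lesion(gray, k=2):
    """Hypothetical helper: cluster grey levels, return the darkest cluster as a mask."""
    gray = np.asarray(gray, dtype=float)
    labels, centroids = kmeans(gray.reshape(-1, 1), k)
    # Assumption: the lesion is darker than the surrounding skin.
    return (labels == centroids[:, 0].argmin()).reshape(gray.shape)
```

Farthest-point initialisation follows the advice to place the initial centroids as far apart as possible; a random start with several restarts is a common alternative.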

DRAWBACKS OF EXISTING METHOD

- High computational load and poor discriminatory power.

- LBP does not discriminate well between local texture regions.

- FNN training is slow for large feature sets.

- Lower classification accuracy.

PROPOSED METHOD

- Skin lesion classification for a Computer-Aided Diagnosis (CAD) system based on:

  - Hybrid features combining color features and texture descriptors

  - A back-propagation neural network (ANN) classifier

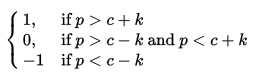

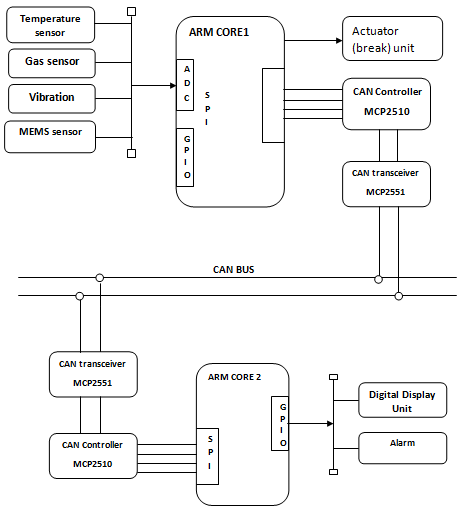

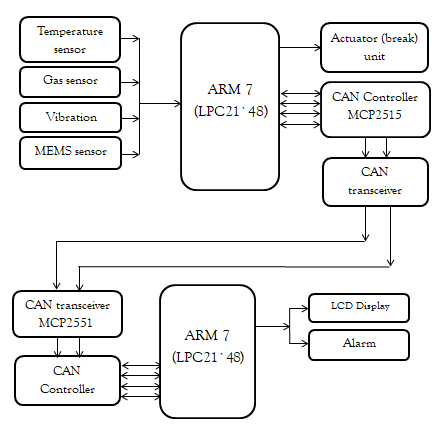

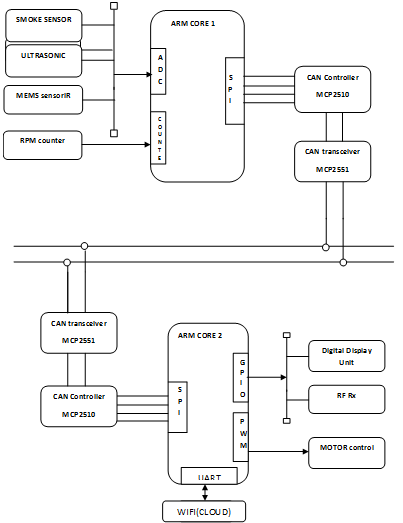

BLOCK DIAGRAM

LOCAL TERNARY PATTERN

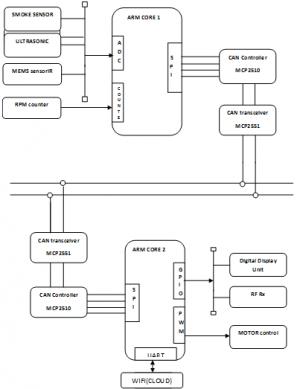

Local ternary patterns (LTP) are an extension of local binary patterns (LBP). Unlike LBP, LTP does not threshold the neighboring pixels into 0 and 1; instead, it uses a threshold constant to map each neighbor to one of three values. With $k$ as the threshold constant, $c$ as the value of the center pixel, and $p$ as the value of a neighboring pixel, the result of thresholding is:

$$
s(p, c, k) =
\begin{cases}
1, & p \ge c + k \\
0, & |p - c| < k \\
-1, & p \le c - k
\end{cases}
$$

In this way, each thresholded pixel takes one of three values, and the thresholded neighbors are combined into a ternary pattern. Because a histogram over all ternary codes would have a very large range (3^8 = 6561 bins for 8 neighbors), each ternary pattern is split into two binary patterns: an upper pattern marking the +1 positions and a lower pattern marking the −1 positions. The two histograms are concatenated, giving a descriptor twice the size of an LBP histogram.
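The LTP thresholding (+1 when p ≥ c + k, 0 when |p − c| < k, −1 when p ≤ c − k) and the split into upper and lower binary patterns can be sketched in NumPy for a 3×3 neighborhood. This is an illustrative sketch, not the project's feature extractor: the clockwise neighbor order, the default threshold `k=5`, and the function names `ltp_codes` and `ltp_histogram` are assumptions. It does not implement the "signed bit multiplication" mentioned below, whose details are not given here.

```python
import numpy as np

# The 8 neighbours of a 3x3 window, clockwise from the top-left;
# bit i of a code corresponds to OFFSETS[i] (assumed ordering).
OFFSETS = [(-1, -1), (-1, 0), (-1, 1), (0, 1), (1, 1), (1, 0), (1, -1), (0, -1)]

def ltp_codes(gray, k=5):
    """Return the upper and lower LTP codes (0..255) for every interior pixel."""
    g = np.asarray(gray, dtype=np.int32)
    h, w = g.shape
    c = g[1:-1, 1:-1]  # centre pixels
    upper = np.zeros_like(c)
    lower = np.zeros_like(c)
    for bit, (dr, dc) in enumerate(OFFSETS):
        p = g[1 + dr:h - 1 + dr, 1 + dc:w - 1 + dc]  # neighbour at offset (dr, dc)
        upper |= (p >= c + k).astype(np.int32) << bit  # ternary value +1
        lower |= (p <= c - k).astype(np.int32) << bit  # ternary value -1
    return upper, lower

def ltp_histogram(gray, k=5):
    """Concatenate the 256-bin histograms of the upper and lower patterns (512 bins)."""
    upper, lower = ltp_codes(gray, k)
    hist = np.concatenate([np.bincount(upper.ravel(), minlength=256),
                           np.bincount(lower.ravel(), minlength=256)]).astype(float)
    return hist / hist.sum()
```

Setting `k=0` and keeping only the `p >= c` test would reduce the upper pattern to a plain LBP code, which shows how LTP generalizes LBP.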

This paper proposes a novel feature extraction method using the Local Ternary Pattern (LTP) and signed bit multiplication, which uses the central pixel for feature computation. Features are first extracted from the initial set of learning images (the training set). Once the features of a test image are extracted, the image is classified by comparing its feature vector with the training vectors in the database using the ANN classifier.

GRAY-LEVEL CO-OCCURRENCE MATRIX

To create a GLCM, use the graycomatrix function (MATLAB Image Processing Toolbox). The graycomatrix function creates a gray-level co-occurrence matrix (GLCM) by calculating how often a pixel with intensity (gray-level) value i occurs in a specific spatial relationship to a pixel with value j. By default, the spatial relationship is defined as the pixel of interest and the pixel to its immediate right (horizontally adjacent), but you can specify other spatial relationships between the two pixels. Each element (i, j) of the resulting GLCM is the number of times a pixel with value i occurred in the specified spatial relationship to a pixel with value j in the input image.

Because computing a GLCM over the full dynamic range of an image is prohibitively expensive, graycomatrix scales the input image: by default, it reduces the number of intensity values in a grayscale image from 256 to eight. The number of gray levels determines the size of the GLCM (eight levels give an 8 × 8 matrix). To control the number of gray levels and the scaling of intensity values, use the NumLevels and GrayLimits parameters of graycomatrix. See the graycomatrix reference page for more information.
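Since this project runs on Python, the counting described above can be sketched directly in NumPy. This is an illustrative sketch, not a port of graycomatrix: it assumes 8-bit input quantized into `levels` equal-width bins (the exact bin edges may differ from graycomatrix's scaling), counts each ordered pair once (non-symmetric), and uses a (row, column) `offset` with (0, 1) meaning the pixel to the immediate right. The function names are hypothetical; scikit-image offers library versions as `skimage.feature.graycomatrix` and `graycoprops`. The three statistics follow the usual contrast, energy, and homogeneity definitions on the normalized matrix.

```python
import numpy as np

def glcm(gray, levels=8, offset=(0, 1)):
    """Count co-occurring grey-level pairs (i, j) separated by offset = (d_row, d_col)."""
    g = np.asarray(gray, dtype=float)
    # Quantise the 8-bit range 0..255 into `levels` equal-width bins.
    q = np.clip((g * levels / 256).astype(int), 0, levels - 1)
    dr, dc = offset
    h, w = q.shape
    # `a` holds each pixel of interest, `b` its neighbour at (row + dr, col + dc).
    a = q[max(0, -dr):h - max(0, dr), max(0, -dc):w - max(0, dc)]
    b = q[max(0, dr):h - max(0, -dr), max(0, dc):w - max(0, -dc)]
    m = np.zeros((levels, levels), dtype=np.int64)
    np.add.at(m, (a.ravel(), b.ravel()), 1)  # m[i, j] += 1 for every pair
    return m

def glcm_features(m):
    """Contrast, energy and homogeneity of a co-occurrence matrix."""
    p = m / m.sum()  # normalise counts to joint probabilities
    i, j = np.indices(p.shape)
    return {
        "contrast": float(((i - j) ** 2 * p).sum()),
        "energy": float((p ** 2).sum()),
        "homogeneity": float((p / (1 + np.abs(i - j))).sum()),
    }
```

Computing the matrix for several offsets (for example (0, 1), (-1, 1), (-1, 0), (-1, -1)) and averaging the statistics is a common way to reduce sensitivity to lesion orientation.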

ARTIFICIAL NEURAL NETWORK

NEURAL NETWORK

Neural networks are predictive models loosely based on the action of biological neurons.

The choice of the name “neural network” was one of the great PR successes of the twentieth century. It certainly sounds more exciting than a technical description such as “a network of weighted, additive values with nonlinear transfer functions”. However, despite the name, neural networks are far from “thinking machines” or “artificial brains”. A typical artificial neural network might have a hundred neurons; by comparison, the human nervous system is believed to have about $3 \times 10^{10}$ neurons. We are still light years from “Data”.
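A "network of weighted, additive values with nonlinear transfer functions" trained by back-propagation can be sketched in a few lines of NumPy. This is a minimal illustrative sketch, not the project's classifier: it assumes one hidden layer of sigmoid units, a sigmoid output trained with binary cross-entropy (so the output error simplifies to `out - y`), full-batch gradient descent, and labels 0 = benign, 1 = malignant. The layer size, learning rate, epoch count, and the names `train_bpnn` and `predict` are hypothetical choices.

```python
import numpy as np

def sigmoid(z):
    return 1.0 / (1.0 + np.exp(-z))

def train_bpnn(X, y, hidden=8, lr=0.5, epochs=2000, seed=0):
    """One-hidden-layer network trained with full-batch back-propagation.

    X: (N, D) feature matrix; y: (N,) labels in {0, 1}.
    """
    rng = np.random.default_rng(seed)
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float).reshape(-1, 1)
    W1 = rng.normal(0, 0.5, (X.shape[1], hidden))
    b1 = np.zeros(hidden)
    W2 = rng.normal(0, 0.5, (hidden, 1))
    b2 = np.zeros(1)
    n = len(X)
    for _ in range(epochs):
        # Forward pass: weighted sums followed by sigmoid transfer functions.
        h = sigmoid(X @ W1 + b1)
        out = sigmoid(h @ W2 + b2)
        # Backward pass: output error, then propagate it to the hidden layer.
        d_out = (out - y) / n
        d_h = (d_out @ W2.T) * h * (1 - h)
        # Gradient-descent updates (d_h was computed with the old W2).
        W2 -= lr * h.T @ d_out
        b2 -= lr * d_out.sum(axis=0)
        W1 -= lr * X.T @ d_h
        b1 -= lr * d_h.sum(axis=0)
    return W1, b1, W2, b2

def predict(params, X):
    """Return 0/1 class predictions by thresholding the output at 0.5."""
    W1, b1, W2, b2 = params
    h = sigmoid(np.asarray(X, dtype=float) @ W1 + b1)
    return (sigmoid(h @ W2 + b2).ravel() >= 0.5).astype(int)
```

In practice the input rows would be the lesion feature vectors (for example ABCD, LTP, and GLCM features), standardized to zero mean and unit variance so that no single feature dominates the weighted sums.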

SOFTWARE REQUIREMENTS

- Python

- OpenCV

- NumPy

RESULTS

The system determines the stage of the lesion and the part of the body where the skin is affected by cancer. The stage may be normal, moderate, or severe, and further clinical treatment can be planned based on it.

